Recently I had to make a rushed trip to visit my Dad up in Ohio. I knew I was going to be there at least a week and that I’d have to get some work done. The dilemma I faced was that while Dad is tech savvy all he has available at his house these days is wi-fi and an iPad. Without realizing it, Dad has fully embraced ‘the cloud’. Once he got the iPad figured out he never looked back. It’s stuck to him like an appendage.

Faced with having to take some technology with me I was unsure just what I should bring. I don’t have a personal Windows laptop and the one my employer makes available is so tightly locked down it’s about as useful as a boat anchor in the Sahara. My Galaxy Tab S is a very capable tablet, but it doesn’t offer the kind of screen real estate I felt I’d need to get serious work done.

Thinking about it for a few minutes I realized that most of what I would need to do can be accessed via the web. I conduct just about all of my personal business via a browser interface these days – email, banking, managing my blog sites, etc. About the only things I turn to locally installed applications for are ESRI’s heavyweight ArcGIS for Desktop environment and Microsoft Office, and if I need a remote desktop connection into one of our GIS servers I have to pull up the Windows RDC interface.

At work we are slowly but steadily moving much of our GIS environment to cloud-based services. We are heavy users of ArcGIS Online and are starting to dabble in Amazon Web Services. We are also trying to gently nudge our engineering staff over to some of Autodesk’s web-based CAD tools like AutoCAD 360 and to web-based document management solutions.

In reviewing my requirements it became apparent that to get work done all I’d need is access to a web browser. The idea of a Chromebook running the Chrome OS (operating system) popped into my head. Actually, the idea had been residing there for some time. I just needed the excuse to go buy one.

Some background:

My wife’s school system moved off of the heavy iron IT model two years ago. They abandoned most of their Windows Server and Microsoft Office environment and moved to Google’s web-based education services environment. Escalating IT costs were putting an unsupportable strain on the school system budget. The move to Google services has been a godsend. Google charges a small annual fee for each account holder in the system (teachers, students and administrators all get their own accounts). In return, account holders get access to the full suite of Google applications and services, and Google manages the environment.

I serve as my wife’s shadow IT support for work related issues. In the old Windows Server/Windows XP/Windows 7 days life was hell. Something was always going wrong and I was always getting calls at work and making trips to her classroom to help troubleshoot hardware and software problems. Roberta is pretty darned tech savvy herself (at one point she served as a Windows NT desktop administrator at her old school in Germany), but she’s a classroom teacher, not an IT tech. She was simply overwhelmed by the number and complexity of the computer issues she faced. She needed to focus on teaching, not troubleshooting a crappy IT environment.

Since the school system moved to Google services the only IT-related trip I’ve had to make to her classroom was to make sure her printer was properly hooked up to her legacy Windows desktop.

The other thing her school has done is scrap the old Windows desktops and laptops in favor of lightweight (and cheap) Chromebooks. At first there was a lot of skepticism about whether a Chromebook could handle the computing needs of the average elementary school student. The school got a grant and bought a small test batch of HP Chromebooks. It turns out the Chromebook experience has been so good that the school is planning to expand it across all grades. The kids have taken to the Chromebooks like ducks to water, and there is virtually zero admin overhead. Just open the Chromebook, log in and go! With the State of Georgia’s move to on-line standardized testing, the ability to place an inexpensive, easy to use and easy to administer laptop on every student’s desk will become critical over the next few years. Chromebooks seem tailor made to meet that need.

So back to my requirements. It’s clear I’d already been thinking about a Chromebook for my own use, but I had the same concerns my wife’s school had – is the Chromebook capable enough to handle my temporary computing needs? The only way to find out was to take the plunge. The night before I headed up to Ohio I ran out to Best Buy and bought an HP Chromebook 14. This is one of the newer models with the Nvidia Tegra CPU, 2 GB of system memory, 16 GB of storage and a 14″ HD display. My specific use and test goals included:

- Overall hardware performance – system speed, storage capacity, wi-fi connectivity, external device connectivity, etc.

- Managing my organization’s enterprise ArcGIS Online site

- Managing my email and calendar from my organization’s Exchange server

- Managing business related Microsoft Office documents

- Managing my personal business needs – Gmail, my personal Microsoft Office 365 account, banking & finance, this blog, and more

- Entertainment – watching Netflix or YouTube videos

- Stressing the system – can all of this be done with all tabs open at once in a single Chrome browser instance?

So how did it do? Overall I was fairly pleased with the whole Chromebook experience. I very clearly understand the strengths and weaknesses of a browser-based operating system so I didn’t try to do anything that the Chromebook can’t support, like trying to install Adobe Photoshop. The few weaknesses I uncovered were either hardware based or were easy to find work-arounds for. Let’s take a look at some of my observations.

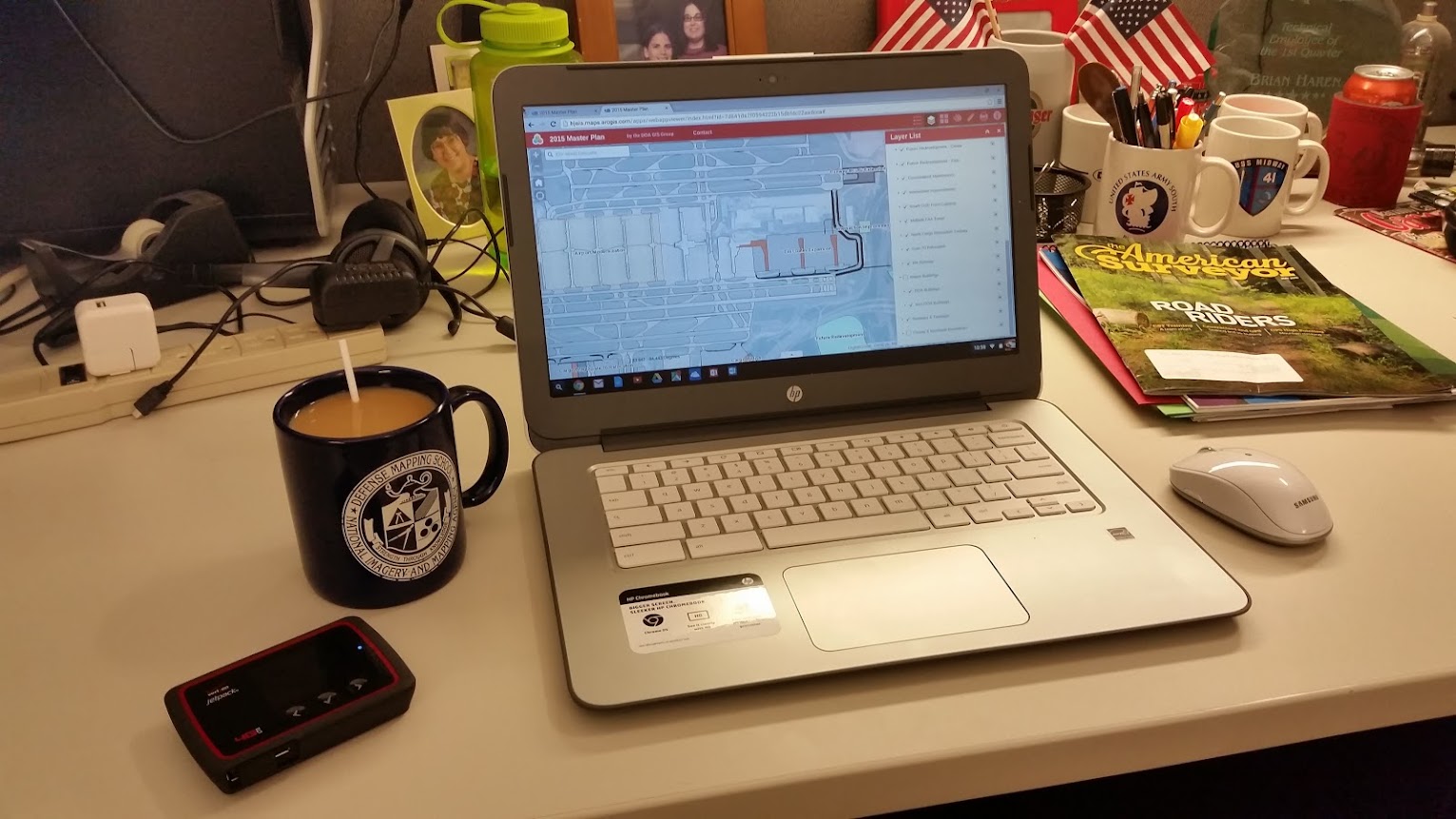

A Chromebook and a wi-fi hotspot (and a cup of coffee) make a great combination for getting some work done in ArcGIS Online

The Good

- Chrome OS stability is very good. I had just one browser ‘freeze’ early on while I was setting up the system and Chrome was doing an update. Since then there have been no stability issues

- The Microsoft OneDrive and Office apps for Chrome are very well implemented. I’d heard that Microsoft put a lot of time and effort into building out these apps for both the Chrome and Android environments, and it shows. MS Office compatibility was one of my big concerns when going to the Chromebook, but my experience so far has been good. Now, I haven’t tried to open and edit a scientific paper with a lot of special formatting or tried to convert Tolstoy’s War and Peace from Russian to English using the Word translate function, but for the few lightweight documents I’ve created or edited it’s worked just fine

- While the Chrome Web Store (similar to the Google Play Store for Android) seems to be well stocked with apps, keep in mind that many of these ‘apps’ are little more than shortcuts to web versions of certain products. I was pleased to find Microsoft Office and OneDrive apps – Word, Excel & PowerPoint – that can tie directly back into my Microsoft Office 365 account. Sorry, no Access. Skype seems to have been integrated into the Outlook app, but since I don’t use Outlook for personal business I didn’t get a chance to test it.

- ArcGIS Online management. I was pleased to find that managing my enterprise and personal ArcGIS Online environments was as easy on the Chromebook as it is sitting behind my GIS workstation back in the office. With one very minor exception – the uploading of thumbnail images to GIS service properties pages – the ArcGIS Online management experience is the same on this inexpensive Chromebook as it is on a $4,000 top-of-the-line desktop workstation

- Video performance is first rate. Videos from YouTube and Netflix played smoothly with no dropped frames or audio issues

You may wonder why all the emphasis on Microsoft Office in the above comments. The reason is simple. While Google thinks its Google Docs suite will conquer the world, the truth is that most of corporate America is still tightly wedded to Microsoft Office. For a Chromebook to be considered a serious replacement for a corporate laptop it must provide robust Microsoft Office compatibility.

What can you do in ArcGIS Online with a Chromebook? Build a Story Map! While in Ohio I visited some old favorite locations and built out this web map using ESRI’s Story Map template. The Chrome OS handles the ArcGIS Online interface just fine. You can click on the image to launch the map

The Not-So-Good

Most of the negative issues seem to be closely related to the HP hardware and its impact on Chrome OS performance. Let’s have a look:

- 2 GB of system memory is not enough. When more than four browser tabs are open performance starts to suffer. Even worse, there are some apps (Norton, the Ad Block app and others) that, when installed, will run in the background on every tab. These background apps absolutely kill system performance. My recommendation is to simply not add any of these to your Chromebook, but the long term solution is a Chromebook with more system memory. The next one I buy will have at least 4 GB of system memory

- Why no delete key? Chromebooks don’t have Delete keys. Really? Like I never make mistakes and have to delete anything

- Overall system performance is highly dependent on the quality and speed of your wi-fi connection. A lousy internet connection = a lousy Chromebook experience. Google touts that some Chrome OS features like Google Docs can be used off-line. My limited experience tells me that this is an iffy proposition that highlights another hardware shortcoming…

- 16 GB of storage is too paltry. The system files take up almost half of that right off the top, leaving a pitiful 8 GB to store all of your off-line treasures. This situation can be mitigated somewhat by using a MicroSD memory card, assuming your Chromebook accepts one. The real answer is for manufacturers to bump up the built-in storage to 32 GB

Neutral Observations

These are neither good points nor bad points, just observations on software and hardware performance:

- HP build quality is very good. Although the construction is all plastic the device is sturdy and well put together. Battery life is also very good, offering slightly over 6 hours of continuous use

- When the Chromebook is closed (but not shut off) battery drain appears to be reduced to near zero. It’s amazing that I can close this thing while it is still running and come back days later and there’s been almost zero battery drain

- If the Chrome OS is secure from viruses and malware, as Google claims, why does Norton offer a Chrome-specific protection package?

- No Google Earth. This highlights the key limitation of the Chrome OS. You can’t run installed applications on a Chromebook. As I mentioned above, the ‘apps’ you add to your Chromebook via the Chrome Web Store are little more than shortcuts to web sites that are highly configured to run in Chrome OS. You can’t install a stand-alone application in Chrome. Since Google Earth requires a ‘client side’ install this means no Google Earth on Chromebooks. So even Google can’t give you access to everything they offer

- You can’t park icons or shortcuts on the ‘desktop’ because the ‘desktop’ isn’t really a ‘desktop’. It is just a holding space for the browser window to occupy. Old time Windows users will find this very frustrating. You can park shortcuts and icons on the taskbar at the bottom of the screen, but the rest of the ‘desktop’ is merely a vast, open and unused space until you open a Chrome browser

- With the maturation of HTML5 and JavaScript, developers are pushing more and more functionality into the web browser, which places more demand on the ‘client side’ hardware. I wonder just how well Chrome OS will perform in the future, particularly on limited hardware, as developers bring more and more processing complexity into the browser technology

- Personally I find this 14 inch Chromebook too big. Don’t get me wrong – it works great, but as a ‘grab ‘n go’ laptop it’s just too large. I’m keeping an eye out for an 11 or 12 inch model with the right combination of processing power and battery life

So is the Chromebook a viable substitute for a full-up laptop? No. If you need to run installed applications (like ArcGIS for Desktop, or Adobe Photoshop, or even Google’s own Google Earth) then you will need to stick with Windows or MacOS. However, when working within the well understood limitations of the Chrome OS environment I found the experience pretty darned good. Let’s just say I’m a fan. In fact I’m already thinking about my next Chromebook purchase. A nice robust 12″ model with a fast processor, 4 GB of system memory, 32 GB of storage and 8 hours of battery life. Price it under $300 and I’ll be in line to buy one!

– Brian